Dataflow - DataFlow Saudi

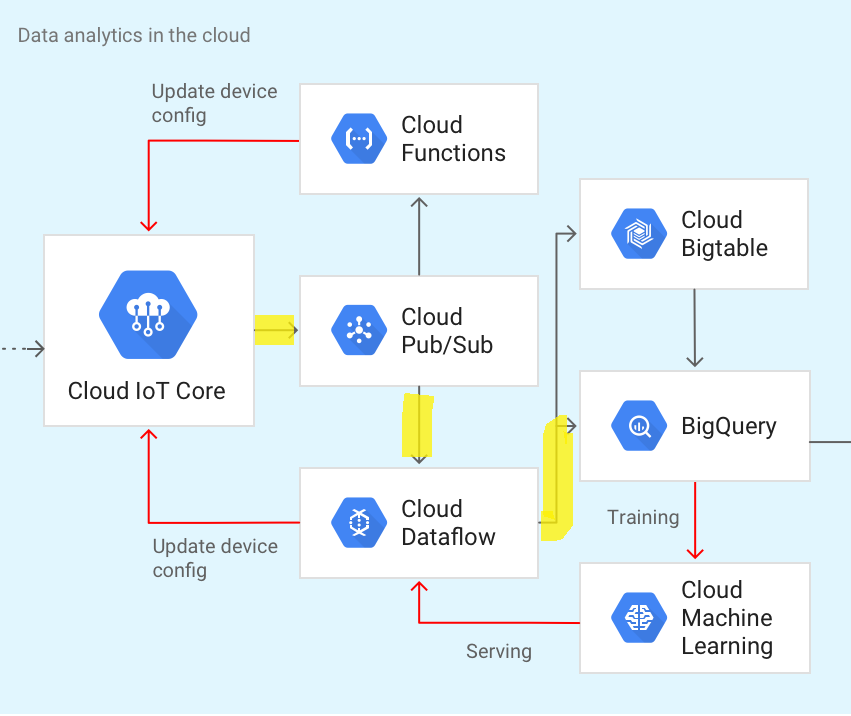

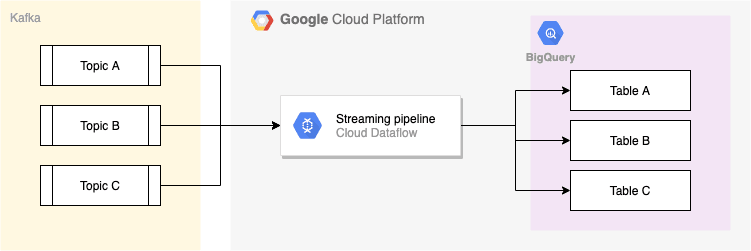

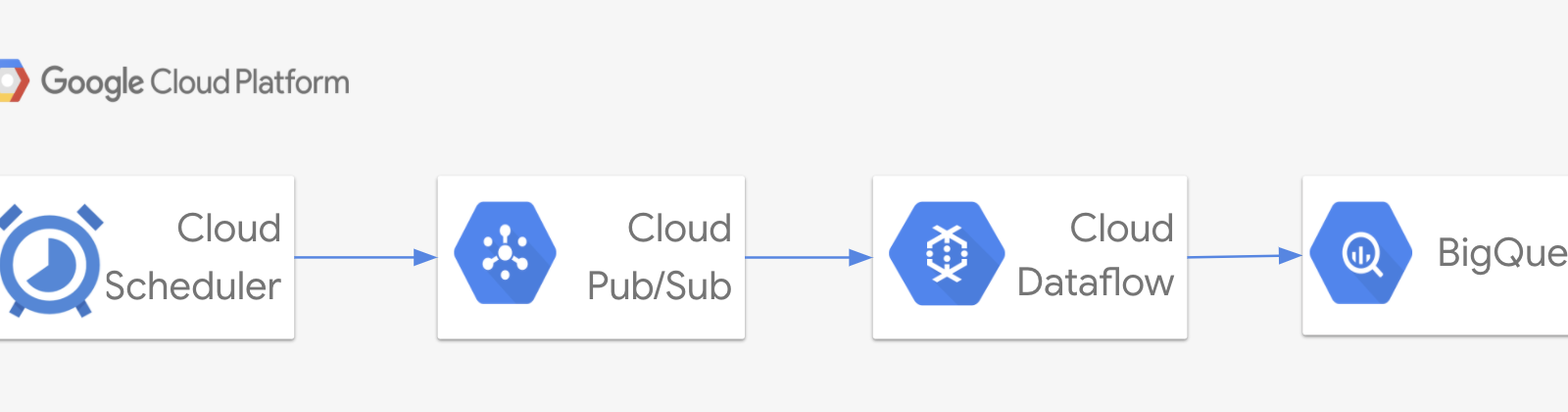

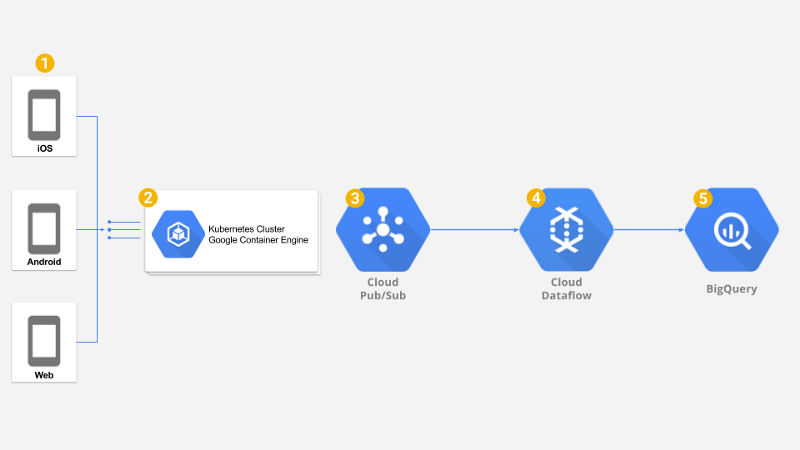

Google Cloud Dataflow to the rescue for data migration

This implies that each determinate process computes a continuous function from input streams to output streams, and that a network of determinate processes is itself determinate and therefore also computes a continuous function.

Because of that, it would be slow to migrate a large volume of data.

Thankfully, we now use Google Cloud Dataflow for this migration.

Dataflow manages the Data Lake configurations internally.

The aim is to help enable use cases such as predictive analytics, real-time personalization, and fraud detection.

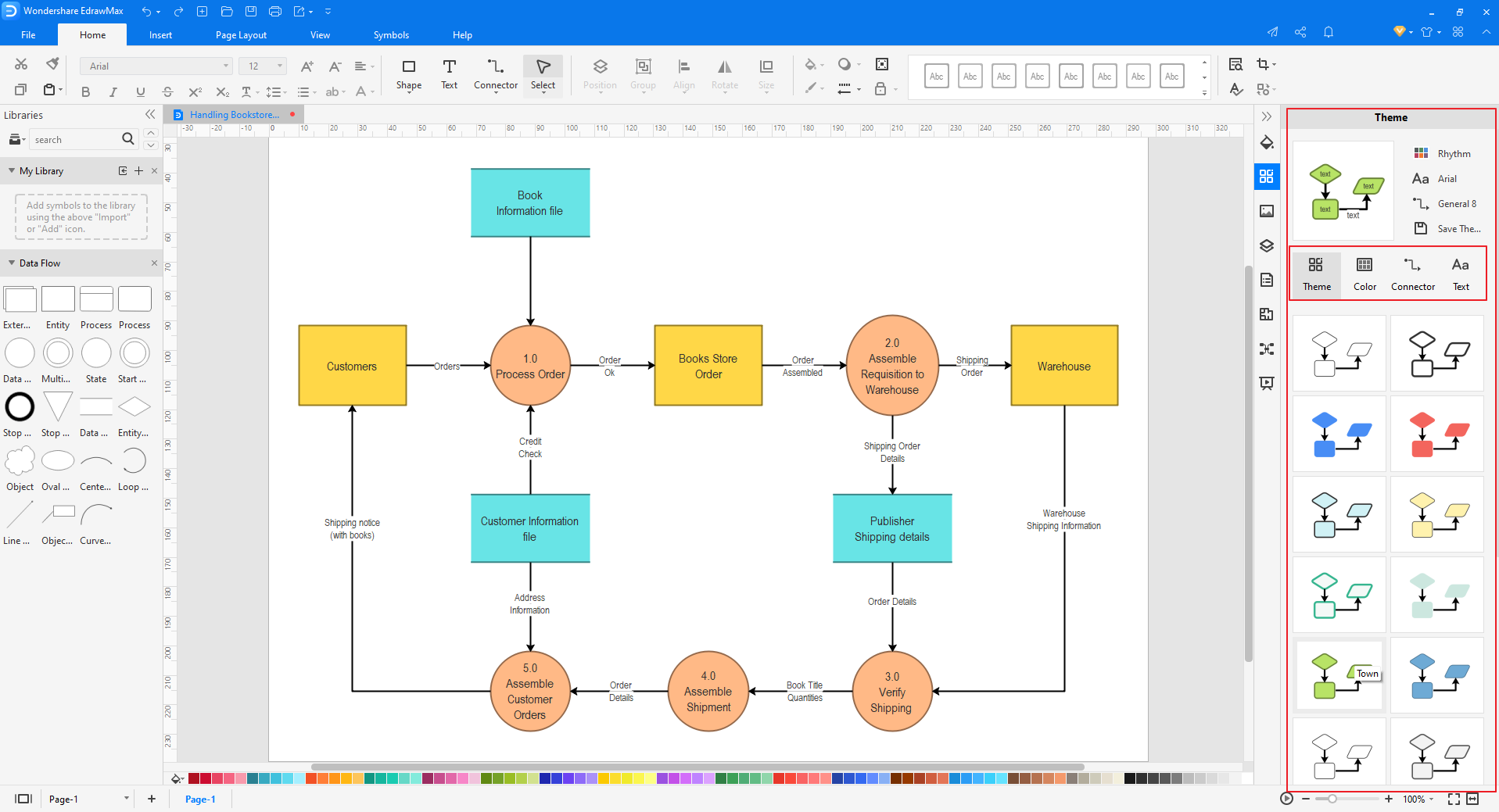

The following example first converts the words to lowercase and then computes the frequency of each word.
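A minimal sketch of those two steps, written in plain Python with only the standard library rather than the Dataflow or Apache Beam API. The function name `word_frequencies` and the sample sentence are illustrative assumptions, not taken from the original example:

```python
from collections import Counter


def word_frequencies(text: str) -> Counter:
    """Lowercase every word, then count how often each one occurs."""
    # Step 1: split on whitespace and convert each word to lowercase.
    lowered = [word.lower() for word in text.split()]
    # Step 2: count the occurrences of each lowercase word.
    return Counter(lowered)


# Hypothetical input; mixed case shows why lowercasing comes first.
sample = "Dataflow moves data and dataflow counts DATA"
counts = word_frequencies(sample)

for word, n in counts.most_common():
    print(word, n)
```

Lowercasing before counting means that `Dataflow` and `dataflow` are treated as the same word. In a real pipeline the same two steps would typically be expressed as a map transform followed by a per-key count.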

2022 www.conventioninnovations.com